Psychological Science Meets a Gullible Post-Truth World

by David G. Myers

Hope College (USA)

March 20, 2018

What use are the people’s wits

who let themselves be led

by speechmakers, in crowds,

without considering

how many fools and thieves

they are among, and how few

choose the good?

-- Heraclitus, 2001 fragment 111

Highlights:

As the managing director of political messaging firm Cambridge Analytica explained to a supposed client, things don’t “need to be true, as long as they’re believed . . . . It’s all about emotion, it’s all about emotion” (Golgowski, 2018).

When emotion trumps evidence, gullibility ensues. And like the “crafty serpent” in the creation story, said Pope Francis, fake news uses mimicry (of real news)—a “sly and dangerous form of seduction that worms its way into the heart.” One wonders if he had a certain master manipulator in mind when quoting Dostoevsky’s The Brothers Karamazov: “People who lie to themselves and listen to their own lie come to such a pass that they cannot distinguish the truth within them, or around them, and so lose all respect for themselves and for others.”...

Public gullibility ... is partly explained by the power of mere repetition. Much as mere exposure to unfamiliar stimuli breeds liking, so mere repetition can make things believable (Dechêne et al., 2010; Moons et al., 2009; Schwarz, Newman, & Leach, 2017). In elections, advertising exposure helps make an unfamiliar candidate into a familiar one, which partially explains why, in U.S. congressional elections, the candidate with the most money wins 91 percent of the time (Lowery, 2014).

Hal Arkes (1990) has called repetition’s power “scary.” Repeated lies can displace hard truths. Even repeatedly saying that a claim is false can, when discounted amid other true and false claims, lead older adults later to misremember it as true (Skurnik et al., 2005). As we forget the discounting, our lingering familiarity with a claim can also make it seem credible....

Moreover, falsehoods fly fast. On Twitter, lies have wings. In one analysis of 126,000 stories tweeted by 3 million people, falsehoods—especially false political news—“diffused significantly farther, faster, deeper, and more broadly than the truth” (Vosoughi, Roy, & Aral, 2018). Compared to true stories, falsehoods often are more emotionally dramatic, novel, and seemingly newsworthy. As Jonathan Swift (1710) anticipated, “Falsehood flies, and the Truth comes limping after it” (or in later renditions, “A lie can travel halfway around the world while the truth is putting on its shoes”).

Retractions of previously provided information also rarely work—people tend to remember the original story, not the retraction (Ecker 2011; Lewandowsky 2012). Courtroom attorneys understand this, which is why they will say something that might be retracted on objection, knowing the jury will remember it anyway. Better than counteracting a falsehood is providing an alternative simple story—and repeating that several times (Ecker 2011; Schwarz 2007).

Mere repetition of a statement not only increases our memory of it, but also serves to increase the ease with which it spills off our tongue. And with this increased fluency comes increased believability (McGlone & Tofighbakhsh, 2000). Other factors, such as rhyming, further increase fluency and believability. “Haste makes waste” says nothing more than “rushing causes mistakes,” but it seems more true. What makes for fluency (familiarity, rhyming) also makes for believability. O. J. Simpson’s attorney understood this when crafting his linguistic slam dunk: “If [the glove] doesn’t fit, you must acquit.”

In his astonishingly perceptive Novum Organum, published in 1620, Francis Bacon anticipated the modern science of gullibility by identifying “idols” or fallacies of the human mind. Consider, for example, his description of what today’s psychological scientists know as the availability heuristic—the human tendency to estimate the commonality of an event based on its mental availability (often influenced by its vividness or distinctiveness):

The human understanding is most excited by that which strikes and enters the mind at once and suddenly, and by which the imagination is immediately filled and inflated. It then begins almost imperceptibly to conceive and suppose that everything is similar to the few objects which have taken possession of the mind.

As Gordon Allport (1954, p. 9) said, “Given a thimbleful of [dramatic] facts we rush to make generalizations as large as a tub.”...

Bacon’s human fallacies also included our tendency to welcome information that supports our views, and to discount what does not:

The human understanding, when any proposition has been once laid down (either from general admission and belief, or from the pleasure it affords), forces everything else to add fresh support and confirmation....

Again, Bacon foresaw the point: “The human understanding is no dry light, but receives an infusion from the will and affections. . . . For what a man had rather were true he more readily believes.”...

Human intuition has powers, but also perils. “The first principle,” said physicist Richard Feynman (1974), “is that you must not fool yourself—and you are the easiest person to fool.” In hundreds of experiments, people have overrated their eyewitness recollections, their interviewee assessments, and their stock-picking talents. Often we misjudge reality, and then we display belief perseverance when facing disconfirming information. As one unknown wag said, “It’s easier to fool people than to convince them they have been fooled.” For this gullibility, our statistical intuition is partly to blame....

“The human understanding,” said Bacon, is “prone to suppose the existence of more order and regularity in the world than it finds.” In our eagerness to make sense of our world, we see patterns. People may perceive a face on the Moon, hear Satanic messages in music played backward, or perceive Jesus’ image on a grilled cheese sandwich. It is one of the curious facts of life that even in random data, we often find order (Falk 2009; Nickerson, 2002, 2005)....

As determined pattern-seekers, we therefore sometimes fool ourselves. We see illusory correlations. We perceive causal links where there are none. We may even make sense out of nonsense, by believing that astrological predictions predict the future, that gambling strategies can defy chance, or that superstitious rituals will trigger good luck. As Pascal recognized, “All superstition is much the same . . . deluded believers observe events which are fulfilled, but neglect and pass over their failure, though it be much more common.”...

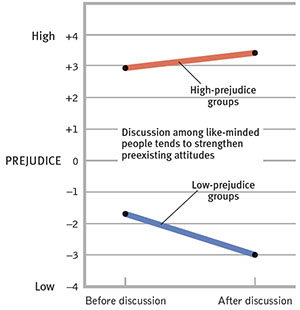

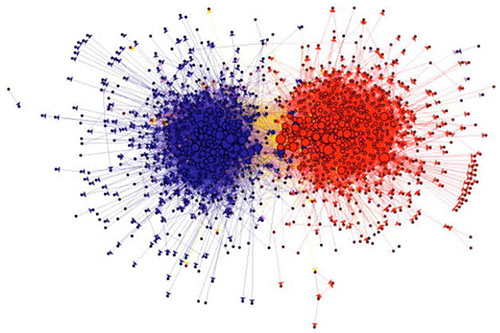

Human gullibility feeds on fake news, mere repetition, vivid anecdotes, self-confirming assessments, self-justification, and statistical misinformation, and is then further amplified as people network with like-minded others. In one of my early experiments with George Bishop, high- and low-prejudice high school students were grouped with kindred spirits for discussion of racial issues, such as a case of property rights clashing with open housing. Our finding, and that of many other experiments since, was that like minds polarize (Figure 5; Myers & Bishop, 1970). Separation + conversation [leads to] polarization....

Within this echo-chamber of the like-minded, group polarization happens. Therefore, what begins as gullibility may become toxic. Views become more extreme. Suspicion may escalate into obsession. Disagreements with the other tribe can intensify to demonization. Disapproval may inflate to loathing....

The result of gullibility-producing biases and polarization is overconfidence in one’s own wisdom. Such overconfidence—what researchers have called cognitive conceit—comes naturally. For example, when people’s answers to factual questions—“Which is longer, the Panama or the Suez Canal?” “Is absinthe a liqueur or a precious stone?”—are 60 percent of the time correct, they will typically feel 75 percent confident (Fischhoff 1977; Metcalfe, 1998).

Overconfidence—the bias that Daniel Kahneman (2015), if given a magic wand, would most like to eliminate—feeds political misjudgment. Philip Tetlock (1998, 2005) gathered 27,000+ expert predictions of world events, such as whether Quebec would separate from Canada, or the future of South Africa. His finding: Like stock brokers, gamblers, and everyday citizens, they were more confident than correct. The experts’ predictions, made with 80 percent confidence on average, were right less than 40 percent of the time.

Citizens with a shallow understanding of complex proposals, such as cap-and-trade or a flat tax, may nevertheless express strong views. As the now-famous Dunning-Kruger effect reminds us, incompetence can ironically feed overconfidence (Kruger & Dunning, 1999). The less people know, the less aware they are of their own ignorance and the more definite they may sound. Asking them to explain the details of these policies exposes them to their own ignorance, which often leads them to express more moderate views (Fernbach 2013). “No one can see his own errors,” wrote the Psalmist (19:12, GNB). But to confront one’s own ignorance is to become wiser....

Science encourages a marriage of open curiosity with skepticism. “If you are only skeptical,” noted Carl Sagan (1987), “then no new ideas make it through to you.” But a smart mind also restrains gullibility by thinking critically. It asks, “What do you mean” and “How do you know”? “Openness to new ideas, combined with the most rigorous, skeptical scrutiny of all ideas, sifts the wheat from the chaff,” Sagan (1996, p. 31) added. Education is an antidote to what Sagan (1996, p. 25) feared—a future for his grandchildren in which “our critical faculties in decline, unable to distinguish between what feels good and what’s true, we slide, almost without noticing, back into superstition and darkness.” Happily, education works. It can train people to recognize how errors and biases creep into their thinking (Nisbett, 2015; Nisbett & Ross, 1980). It can engage analytic thinking: “Activate misconceptions and then explicitly refute them,” advise Alan Bensley and Scott Lilienfeld (2017; see also Chan et al., 2017). It can harness the powers of repetition, availability, and the like to teach true information (Schwarz 2017). And thus, at the end of the day, it can and does predict decreased gullible acceptance of conspiracy theories (van Prooijen, 2017)....

Truth matters.

-- Psychological Science Meets a Gullible Post-Truth World, by David G. Myers

Abstract

For us researcher-educators, the spread of misinformation is troubling. In the United States, for example, we feel distressed when public understandings radically diverge from reality—when voters believe, contrary to evidence, that crime is rising, that new immigrants are often criminals, that under Obama unemployment rose, and that climate change is a hoax.

Such gullibility crosses partisan lines. Most U.S. Democrats wrongly believed inflation had risen under Republican president Ronald Reagan. And most Republicans believed that taxes and unemployment had increased under Democratic president Barack Obama.

Some misinformation is intentional fake news—“lies in the guise of news.” But social-cognitive dynamics also feed gullibility. There is persuasive power to mere repetition, the availability heuristic, confirmation bias, self-justification, statistical illiteracy, group polarization, and overconfidence. And there is counteracting, truth-supportive power to evidence-based scientific scrutiny, education into critical thinking, and the religious mandate for humility.

“Trust me, Wilbur. People are very gullible.

They’ll believe anything they see in print.”

― E.B. White, Charlotte’s Web

Gullibility, the great enemy of wisdom, poisons and polarizes today’s public life. We live, declared Oxford Dictionaries with its 2016 word of the year, in a “post-truth” age. The Collins Dictionary seemingly concurred, by naming “fake news”—false information disseminated under the guise of news—its 2017 word of the year. “Is Truth Dead?,” pondered a 2017 TIME cover, set on a stark black background. And in 2018, the Rand Corporation offered a 326-page report on Truth Decay, exploring “the diminishing role of facts and analysis” in public life. Yet some, such as Dilbert creator Scott Adams, an admirer of Donald Trump’s persuasion tactics, see opportunity, as conveyed by the title of his Win Bigly: Persuasion in a World Where Facts Don’t Matter.

In the United States, concerns for citizen gullibility cross party lines. From the Democratic Party side, Senator Al Franken (2017) used his parting Senate address to warn that “We’re losing the war for truth” and Hillary Clinton (2018) agreed: “We are in the midst of war on truth, facts and reason.” In his farewell address, President Obama (2017) warned that a “threat to democracy” was growing from the lack of a “common baseline of facts” and from underappreciating “that science and reason matter.” We have become, he lamented, “so secure in our bubbles that we start accepting only information, whether it’s true or not, that fits our opinions, instead of basing our opinions on the evidence that is out there.”

His one-time opponent, Republican Senator John McCain (2017) expressed comparable alarm about “the growing inability, and even unwillingness, to separate truth from lies.” McCain’s fellow Arizona Republican colleague, Senator Jeff Flake (2018), concurred: “2017 was a year which saw the truth—objective, empirical, evidence-based truth—more battered and abused than at any time in the history of our country.”

Concerns about gullibility and misinformation extend beyond political partisans. Is eating genetically modified (GM) foods safe? Yes, say 37 percent of U.S. adults, and 88 percent of 3,447 American Association for the Advancement of Science members—both in Pew Research Center surveys (Funk & Rainie, 2015). Is climate change “mostly due to human activity?” Yes, say 50 percent of U.S. adults and 97 percent of climate experts (Cook et al., 2016).

“This is not about Republicans versus Democrats,” observed National Institutes of Health former director Harold Varmus (2017). “It is about a more fundamental divide, between those who believe in evidence . . . and those who adhere unflinchingly to dogma.” And that divide is hugely important, reflected British historian Simon Schama (2017): “Indifference about the distinction between truth and lies is the precondition of fascism.”

Gullibility and Misinformation Writ Large: The U.S. Example

In Enlightenment Now, Steven Pinker (2018) reminds us that human gullibility is longstanding: Unlike our medieval ancestors, few folks today “believe in werewolves, unicorns, witches, alchemy, astrology, bloodletting, miasmas, animal sacrifice, the divine right of kings, or supernatural omens in rainbows and eclipses.” Yet gullibility endures. Its enduring extent and impact—and the impetus for this symposium—appear in Americans’ striking misperceptions of social reality, with people’s beliefs often divorced from facts. “Between the idea/and the reality/ . . . falls the shadow” (T. S. Eliot). Some examples:

Perception: Crime is rising.

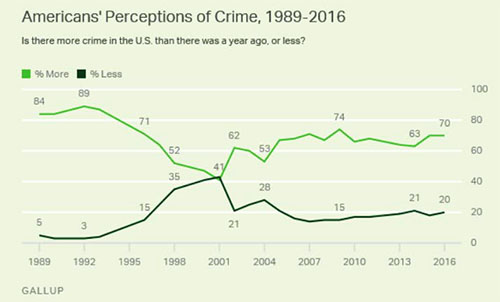

“The murder rate in our country is the highest it’s been in 47 years,” said Donald Trump (2017) shortly after his inauguration. Rising crime “is a dangerous permanent trend,” echoed his Attorney General, Jeff Sessions (2017)—both amplifying public fears that had helped put them in office. And most Americans nod their heads in agreement. Each year, right up to the present, 7 in 10 Americans have told Gallup they believe that the U.S. has suffered more crime than in the previous year (Figure 1, from Swift, 2016).

Reality: Crime is falling.

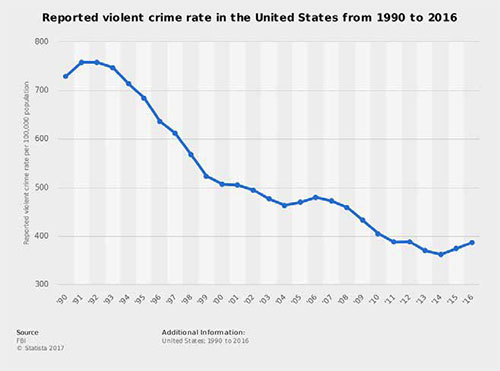

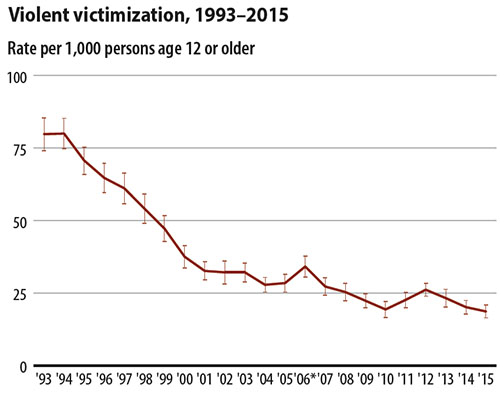

But FBI violent crime data (aggregated from crimes reported to local law enforcement) reveals an alternative (actual) reality. Violent crime has plummeted since the early 1990s (2017; Figure 2). This reality of decreasing crime is confirmed in people’s self-reports for the Bureau of Justice Statistics’ National Crime Victimization Surveys (2016; Figure 3). Moreover, property crime rates and reports have similarly declined. Ergo, belief and fact have traveled in opposite directions. And when fear and fact conflict, fearmongering often wins—a phenomenon long ago recognized by Lord Chesterfield (1693–1773): “The best way to compel weak-minded people to adopt our opinion, is to frighten them from all others, by magnifying their danger.”

Perception: Many immigrants are criminals.

“On the issue of crime,” a Gallup survey (McCarthy, 2017) reveals, “Americans are five times more likely to say immigrants make the situation worse rather than better (45% to 9%, respectively).” The National Academy of Sciences (2015) reports that this perception of crime-prone immigrants “is perpetuated by ‘issue entrepreneurs’ who promote the immigrant-crime connection in order to drive restrictionist immigration policy.”

Horrific rare incidents feed the narrative, as in the oft-retold story of the Mexican national killing a young woman in San Francisco. Donald Trump’s (2015) words epitomized the perception: “When Mexico sends its people . . . they’re bringing drugs. They’re bringing crime. They’re rapists.” A January 2018 Trump campaign ad extended this immigrants-as-killers theme with images of an illegal-immigrant murderer while a narrator referred to “evil, illegal immigrants who commit violent crimes,” noting that “Democrats who stand in our way will be complicit in every murder committed by illegal immigrants” (DonaldJTrump.com, 2018). His 2018 State of the Union address focused on the teary parents of two daughters said to have been murdered by a gang with illegal immigrant members. “If we don’t get rid of these loopholes where killers are allowed to come into our country and continue to kill … if we don’t change it, let’s have a shutdown,” Trump (2018) said two weeks later.

Reality: Immigrants are not crime-prone.

Immigrants who are poor and less educated may fit our image of criminals. Yet some studies find that, compared with native-born Americans, immigrants commit less violent crime (Butcher & Piehl, 2007; Riley, 2015). “Immigrants are less likely than the native-born to commit crimes,” confirms a National Academy of Sciences report (2015). After analyzing incarceration rates, the conservative Cato Institute (2017) confirmed that “immigrants are less likely to be incarcerated than natives relative to their shares of the population. Even illegal immigrants are less likely to be incarcerated than native-born Americans.” Noncitizens are reportedly 7 percent of the U.S. population and 6 percent of state and federal prisoners (KFF, 2018; Rizzo, 2018). Moreover, as the number of unauthorized immigrants has tripled since 1990 (Krogstad et al., 2017), the crime rate, as we have seen, plummeted.
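One rough way to read the noncitizen figures is as a representation ratio: a group's share of prisoners divided by its share of the population, with values below 1 indicating under-representation. The Python sketch below applies that ratio to the two percentages quoted above. The function name is hypothetical, and the ratio is only a crude summary: it assumes the two shares are directly comparable and makes no adjustment for age, sex, or other factors.

```python
def representation_ratio(prisoner_share, population_share):
    """Share of prisoners divided by share of population (hypothetical helper).

    A value below 1 means the group is under-represented among prisoners
    relative to its share of the population.
    """
    if population_share <= 0:
        raise ValueError("population_share must be positive")
    return prisoner_share / population_share


# Percentages quoted in the text (KFF, 2018; Rizzo, 2018), expressed as fractions.
noncitizen_ratio = representation_ratio(prisoner_share=0.06, population_share=0.07)

print(f"Noncitizen representation ratio: {noncitizen_ratio:.2f}")
```

On these figures the ratio falls below 1, which is consistent with the reports cited above.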

Perception: Unemployment worsened during the Obama presidency.

In his presidential-bid announcement speech, Trump (2015) declared that “Our real unemployment is anywhere from 18 to 20 percent.” His voters heard and believed the repeated message, with 67 percent of them telling Public Policy Polling (2016) that unemployment had increased during the Obama years.

Reality: Following the recession-era doldrums that carried into Obama’s first year, unemployment steadily and substantially dropped (Figure 4; BLS, 2017).

By the time he left office, unemployment was down to 4.9 percent and some industries were facing a worker shortage.

Perception: The stock market fell during the Obama presidency.

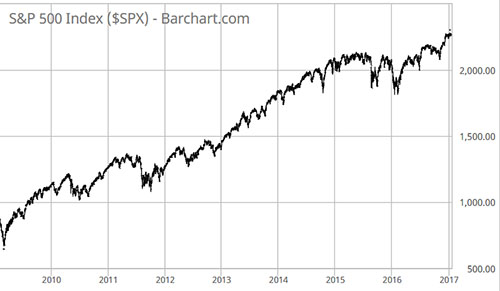

In the same Public Policy Polling survey, Trump supporters were equally divided on whether the stock market had risen or fallen during the Obama years (4 in 10 believed each, with the remainder being unsure).

Reality: The stock market (S&P 500) nearly tripled during the Obama years.

These recent U.S. examples have a partisan tinge. And it’s true that several analyses found that the top fake news stories of the recent U.S. election, some planted by Russians, were similarly partisan (Hachman, 2017; Lee, 2016). Thus, all evidence to the contrary, President Obama finished his time in office with 42 percent of Republicans still believing he was born in Kenya, making him ineligible to have been president (Zorn, 2017). And in 2018, a conspiracy theory flourished that the FBI harbored a dark secret society that was plotting against the Trump presidency (Cillizza, 2018).

Much gullibility is not so overtly partisan (NASA faked the moon landing; crashed UFO spacecraft are stored at Nevada’s Area 51; the Holocaust is a myth). Some bias is fostered by social scientists’ eagerness to believe claims that suit their (mostly) progressive values (Crawford & Jussim, 2018). And much political bias is bipartisan. Peter Ditto and his colleagues (2015, 2018) meta-analyzed the political bias literature and “found clear evidence of partisan bias in both liberals and conservatives, and at virtually identical levels.” Thus, both Democrats and Republicans tend to believe that, when their party holds the presidency, the president cannot control gas prices; but when the opposing party is in power they believe the president can do so (Vedantam, 2012). Or consider Democratic partisan bias in Larry Bartels’ analysis (reported by FiveThirtyEight.com) of

a 1988 survey that asked “Would you say that compared to 1980, inflation has gotten better, stayed about the same, or gotten worse?” Amazingly, over half of the self-identified strong Democrats in the survey said that inflation had gotten worse and only 8% thought it had gotten much better, even though the actual inflation rate dropped from 13% to 4% during Reagan’s eight years in office (Gelman, 2009).

Other research teams have confirmed mirror image bias (Brandt, 2017; Chambers, Schlenker & Collisson, 2013; Crawford, Kay, & Duke, 2015). Whatever supports our views, we tend to believe; whatever contradicts our views, we tend to dismiss. This humbling finding is a reminder to us all of how easy it is (paraphrasing Jesus) to “see the speck in our neighbor’s eye” while not noticing the sometimes bigger speck in our own.

Explaining Gullibility and Misinformation

“When people are bewildered they tend to become credulous,” warned Calvin Coolidge (1930). In a world of bewildering change, what explains the power of master manipulators and the striking embrace of false information regarding crime, immigration, the economy, Obama’s birth, and various conspiracy theories?

Fake News

Some credulity feeds on plain fake news—what Nicholas Kristof (2016) called “lies in the guise of news” as he described how Macedonian teens built fake websites to attract links and advertising dollars. Other times, fake news is motivated by politics rather than profit. France, Britain, and the United States, among other countries, have all accused Russia of aiming to sway public opinion and elections with legitimate-looking websites that tout falsehoods. Hence, shortly after the 2016 election, Barack Obama (2016) warned that “If we can’t discriminate between serious arguments and propaganda, then we have problems.” Facebook has taken steps to help identify fake news posts. But with new technology enabling hackers to place a head on another’s body, or to manipulate one’s words, tomorrow’s fake political videos may be a large problem (Farrell & Perlstein, 2018).

In the United States, considerable fake news has targeted Democrats, as with one chain e-mail that was “distinguished by its longevity and implausibility” (PolitiFact, 2009). Democratic House Speaker

Nancy Pelosi wants to put a Windfall Tax on all stock market profits (including Retirement fund, 401Ks and Mutual Funds!) Alas, it is true—all to help the 12 Million Illegal Immigrants and other unemployed minorities!

“Given the number of times we’ve been asked about this particular bit of bunk,” reported FactCheck.org (2009), “a lot of gullible people are indeed sending it on to their friends.”

But some fake news has sought to tarnish Republicans, as in reports of Donald Trump’s supposed 1998 People interview:

If I were to run, I’d run as a Republican. They’re the dumbest group of voters in the country. They believe anything on Fox News. I could lie and they’d still eat it up.

Although this interview comment never appeared in People, it became a Facebook news feed item that “just wouldn’t die” (Feldman, 2016; Snopes, 2017).

Pope Francis (2018) has deplored the infectious viral spread of fake news—“false information . . . meant to deceive and manipulate . . . by appealing to stereotypes and common social prejudices, and exploiting instantaneous emotions like anxiety, contempt, anger, and frustration.” As the managing director of political messaging firm Cambridge Analytica explained to a supposed client, things don’t “need to be true, as long as they’re believed . . . . It’s all about emotion, it’s all about emotion” (Golgowski, 2018).

When emotion trumps evidence, gullibility ensues. And like the “crafty serpent” in the creation story, said Pope Francis, fake news uses mimicry (of real news)—a “sly and dangerous form of seduction that worms its way into the heart.” One wonders if he had a certain master manipulator in mind when quoting Dostoevsky’s The Brothers Karamazov: “People who lie to themselves and listen to their own lie come to such a pass that they cannot distinguish the truth within them, or around them, and so lose all respect for themselves and for others.”

Some fake news spreads not from demagoguery, but less maliciously from mere satire that gullible people misinterpret, as in the Borowitz headline that “Trump Threatens to Skip Remaining Debates if Hillary is There,” which Snopes (2016) felt compelled to explain was a spoof. Snopes has also felt compelled to discount other satirical reports, some from The Onion, that, for example,

• “Mike Pence said that he was disappointed in husbands and fathers for allowing women to participate in the Women’s March,”

• “The Secret Service has launched an ‘emotional protection’ unit for President Trump,” and

• “Donald Trump announced plans to convert the USS Enterprise into a ‘floating hotel and casino.’”

Mere Repetition

“Vaccines cause autism.” “Climate change is a hoax.” “Muslim terrorists pose a grave threat.” Never mind the facts—that, for example, of 230,000 murders on U.S. soil since September 11, 2001, an infinitesimal proportion—123 by 2017—were terrorist acts by Muslim Americans, with none committed by terrorists born in the seven nations covered by Donald Trump’s proposed anti-terrorist travel ban (Kristof, 2017). In 2015 and again in 2016, toddlers (with guns) killed more Americans than terrorists (Ingraham, 2016; Snopes, 2015).

Public gullibility about such myths is partly explained by the power of mere repetition. Much as mere exposure to unfamiliar stimuli breeds liking, so mere repetition can make things believable (Dechêne et al., 2010; Moons et al., 2009; Schwarz, Newman, & Leach, 2017). In elections, advertising exposure helps make an unfamiliar candidate into a familiar one, which partially explains why, in U.S. congressional elections, the candidate with the most money wins 91 percent of the time (Lowery, 2014).

Hal Arkes (1990) has called repetition’s power “scary.” Repeated lies can displace hard truths. Even repeatedly saying that a claim is false can, when discounted amid other true and false claims, lead older adults later to misremember it as true (Skurnik et al., 2005). As we forget the discounting, our lingering familiarity with a claim can also make it seem credible.

In the political realm, repeated misinformation can have a seductive influence (Bullock, 2006; Nyhan & Reifler, 2008). Recurring clichés (“Crooked Hillary”) can displace complex realities. George Orwell’s Nineteen Eighty-Four harnessed the power of repeated slogans: “Freedom is slavery.” “Ignorance is strength.” “War is peace.”

Moreover, falsehoods fly fast. On Twitter, lies have wings. In one analysis of 126,000 stories tweeted by 3 million people, falsehoods—especially false political news—“diffused significantly farther, faster, deeper, and more broadly than the truth” (Vosoughi, Roy, & Aral, 2018). Compared to true stories, falsehoods often are more emotionally dramatic, novel, and seemingly newsworthy. As Jonathan Swift (1710) anticipated, “Falsehood flies, and the Truth comes limping after it” (or in later renditions, “A lie can travel halfway around the world while the truth is putting on its shoes”).

Retractions of previously provided information also rarely work—people tend to remember the original story, not the retraction (Ecker 2011; Lewandowsky 2012). Courtroom attorneys understand this, which is why they will say something that might be retracted on objection, knowing the jury will remember it anyway. Better than counteracting a falsehood is providing an alternative simple story—and repeating that several times (Ecker 2011; Schwarz 2007).

Mere repetition of a statement not only increases our memory of it, but also serves to increase the ease with which it spills off our tongue. And with this increased fluency comes increased believability (McGlone & Tofighbakhsh, 2000). Other factors, such as rhyming, further increase fluency and believability. “Haste makes waste” says nothing more than “rushing causes mistakes,” but it seems more true. What makes for fluency (familiarity, rhyming) also makes for believability. O. J. Simpson’s attorney understood this when crafting his linguistic slam dunk: “If [the glove] doesn’t fit, you must acquit.”

Availability of Vivid (and Sometimes Misleading) Anecdotes

In his astonishingly perceptive Novum Organum, published in 1620, Francis Bacon anticipated the modern science of gullibility by identifying “idols” or fallacies of the human mind. Consider, for example, his description of what today’s psychological scientists know as the availability heuristic—the human tendency to estimate the commonality of an event based on its mental availability (often influenced by its vividness or distinctiveness):

The human understanding is most excited by that which strikes and enters the mind at once and suddenly, and by which the imagination is immediately filled and inflated. It then begins almost imperceptibly to conceive and suppose that everything is similar to the few objects which have taken possession of the mind.

As Gordon Allport (1954, p. 9) said, “Given a thimbleful of [dramatic] facts we rush to make generalizations as large as a tub.” To persuade people of the perils of immigration and the need to “build the wall,” Donald Trump repeatedly told the vivid story of the previously deported homeless Mexican who fired a gun killing a San Francisco woman. (The bullet actually ricocheted off the ground, and the man was found not guilty.) The political use of dramatic anecdotes is bipartisan, as illustrated when the wrongful detaining of Australian children’s author Mem Fox at Los Angeles Airport triggered progressives’ outrage over Trump administration border policies. But with 51 million nonresident tourists entering the U.S. each year, it behooved us to remember that “the plural of anecdote is not data.” The staying power of vivid images contributes to misperception that crime has been increasing. In 2015, six of the top ten Associated Press news stories were about gruesome violence (Bornstein & Rosenberg, 2016). “If it bleeds, it leads.” Small wonder that Americans grossly overestimate their vulnerability to crime and terror.

In other ways, too, we fear the wrong things. We exhibit probability neglect as we worry about unlikely possibilities while ignoring higher probabilities. As Bacon observed, “Things which strike the sense outweigh things which do not immediately strike it, though they be more important.” Thanks to cognitively available images of airplane crashes, we may feel more at risk in airplanes than in cars. In reality, from 2010 through 2014, U.S. travelers were nearly 2,000 times more likely to die in a car crash than on a commercial flight covering the same distance (National Safety Council, 2017). In 2017, there were no fatal commercial jet crashes anywhere in the entire world (BBC, 2018). For most air travelers, the most dangerous part of the journey is the un-scary drive to the airport.

After 9/11, as many people forsook air travel for driving, I estimated that if Americans flew 20 percent less (as airline data indicated) and instead drove half those unflown miles, we could expect an additional 800 traffic deaths in the ensuing year (Myers, 2001). Gerd Gigerenzer (2004, 2010) later checked that prediction against U.S. traffic accident data. The data confirmed an excess (compared to the prior five years) of some 1,595 deaths in the year following 9/11—people who “lost their lives on the road by trying to avoid the risk of flying.” Ergo, the terrorists appear to have killed, unnoticed, six times more people on America’s roads than they did with the 265 fatalities of those flying on those four planes.
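As a rough check on the “six times” comparison, the ratio can be computed directly from the two figures quoted above. This is only a sketch of the arithmetic; the variable names are illustrative and not drawn from Gigerenzer’s analysis.

```python
# Figures quoted in the text; variable names are illustrative assumptions.
excess_road_deaths = 1595  # estimated excess traffic deaths in the year after 9/11
plane_fatalities = 265     # fatalities aboard the four hijacked planes

# How many times larger the road toll was than the toll aboard the planes.
ratio = excess_road_deaths / plane_fatalities
print(f"Excess road deaths were about {ratio:.1f} times the plane fatalities")
```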

In 2018, school shootings understandably captured attention. When an unarmed stranger wandered into the girls’ bathroom in my 2nd grade granddaughter’s school complex in the opposite corner of the country from Parkland, Florida, police surrounded the schools, helicopters flew overhead, and frightened children were ushered onto school buses overseen by guards with assault rifles. In schools elsewhere, children practiced huddling in closets during active shooter drills. Protecting children is appropriately a high priority. Yet Harvard risk expert David Ropeik (2018) calculates that the likelihood of any given school student being killed by a gun on any given day is incomprehensibly small—1 in 614,000,000—“far lower than almost any other mortality risk a kid faces, including traveling to and from school” or playing sports. Compared to the evil and emotions of a school shooting (or being eaten by a shark), “Statistics seem cold and irrelevant,” acknowledges Ropeik. But, he argues, exaggerated fears of an “extraordinarily rare risk” do their own form of harm to children’s security and well-being.

When estimating risks, reasonable people should, of course, seek data. Yet cognitive availability often predominates, as was illustrated one morning after I awoke at an airport hotel where I had been waylaid after a flight delay. The nice woman working the breakfast bar explained how, day after day, she met waylaid passengers experiencing weather problems, crew delays, and mechanical problems. Her conclusion (from her mentally available sample): Flying so often goes awry that if she needed to travel she would never fly.

Not-gullible people should likewise seek data when assessing global climate change: “Over time, are the planet’s air and seas warming? Are the polar ice caps melting? Are vegetation patterns changing? And should accumulating atmospheric CO2 lead us to expect such changes?” Yet thanks to the availability heuristic, dramatic weather events make us gasp, while such global data we hardly grasp. Thus, people’s recent weather experience contaminates their beliefs about the reality and threat of climate change (Kaufman, 2017). People express more belief in global warming, and more willingness to donate to a global warming charity, on warmer-than-usual days than on cooler-than-usual days (Li, 2011; Zaval, 2014). A hot spell increases people’s worry about global warming, while a cold day reduces their concern. In one survey, 47 percent of Americans agreed that “The record snowstorms this winter in the eastern United States make me question whether global warming is occurring” (Leiserowitz, 2011a). But then, after an ensuing blistering summer, 67 percent agreed that global warming helped explain the “record high summer temperatures in the U.S. in 2011” (Leiserowitz, 2011b). A tweet from comedian Stephen Colbert (2014) gets it: “Global warming isn’t real because I was cold today! Also great news: world hunger is over because I just ate.”

Confirmation Bias and Self-Justification

Bacon’s human fallacies also included our tendency to welcome information that supports our views, and to discount what does not:

The human understanding, when any proposition has been once laid down (either from general admission and belief, or from the pleasure it affords), forces everything else to add fresh support and confirmation.

Reflecting on his experiments demonstrating this human yen to seek self-supporting evidence (the confirmation bias), Peter Wason (1981) concludes that “Ordinary people evade facts, become inconsistent or systematically defend themselves against the threat of new information relevant to the issue.” So, having formed a belief—that climate change is real (or a hoax), that gun control does (or does not) save lives, that people can (or cannot) change their sexual orientation—people selectively expose themselves to belief-supportive information. Our minds vacuum up supportive information. To believe is to see.

Confirmation bias and selective exposure give insight into the striking result of a May, 2016 Public Policy Polling survey. Among voters with a favorable view of Donald Trump (a subset of Republicans), most believed Barack Obama was Muslim rather than Christian (65 percent versus 13 percent). Among voters with an unfavorable view of Trump, the numbers were reversed (13 percent versus 64 percent). Since both can’t be right, the survey again displays gullibility writ large. And in the year after Trump’s inauguration, anti- and pro-Trump people could read reports of Trump campaign contacts with Russia and reach similarly opposite conclusions of either “collusion” or “a nothing burger.”

A sister phenomenon, self-justification, further sustains misinformation. To believe is also to justify one’s beliefs. This was dramatically evident in U.S. national surveys surrounding the Iraq war. As the war began, 4 in 5 Americans supported the war—on the assumption that Iraq had weapons of mass destruction, though only 38 percent said the war would be justified if there were no such weapons (Duffy, 2003; Newport, 2003). When the war was completed without any discovery of WMDs, 58 percent now justified the war even without such weapons (Gallup, 2003). “Whether or not they find weapons of mass destruction doesn’t matter,” suggested Republican pollster Frank Luntz (2003), “because the rationale for the war changed.” As Daniel Levitin (2017, p. 14) observed in Weaponized Lies, “The brain is a very powerful self-justifying machine.”

Confirmation bias and self-justification are both driven by people’s motives. Motives matter, emphasize Stephen Lewandowsky and Klaus Oberauer (2016): “Scientific findings are rejected . . . because the science is in conflict with people’s worldviews, or political or religious opinions.” Thus, a conservative libertarian who cherishes the unregulated free market may be motivated to ignore evidence that government regulations serve the common good—that gun control saves lives, that mandated livable wages and social security support human flourishing, that future generations need climate-protecting regulations. A liberal may be likewise motivated to discount science regarding the toxicity of teen pornography exposure, the benefits of stable co-parenting, or the innovations incentivized by the free market. Again, Bacon foresaw the point: “The human understanding is no dry light, but receives an infusion from the will and affections. . . . For what a man had rather were true he more readily believes.”

Statistical Illiteracy

Our human powers of automatic information processing feed our intuition. As car mechanics and physicians accumulate experience, their intuitive expertise often allows them to quickly diagnose a problem. Chess masters, with one glance at the board, intuitively know the right move. Japanese chicken sexers use acquired pattern recognition to separate newborn pullets and cockerels with instant accuracy. And for all of us, social experience enables us, when shown but a “thin slice” of another’s behavior, to gauge their energy and warmth.

Human intuition has powers, but also perils. “The first principle,” said physicist Richard Feynman (1974), “is that you must not fool yourself—and you are the easiest person to fool.” In hundreds of experiments, people have overrated their eyewitness recollections, their interviewee assessments, and their stock-picking talents. Often we misjudge reality, and then we display belief perseverance when facing disconfirming information. As one unknown wag said, “It’s easier to fool people than to convince them they have been fooled.” For this gullibility, our statistical intuition is partly to blame.

Probability neglect.

Consider, for example, how statistical illiteracy and misinformation feed health scares (Gigerenzer, 2010). In the 1990s, the British press reported that women taking a particular contraceptive pill had a 100 percent increased risk of stroke-risking blood clots. This caused thousands of women to stop taking the pill, leading to many unwanted pregnancies and 13,000 additional abortions (which were also linked with increased blood-clot risk). A study indeed had found a 100 percent increased risk—but a nominal increase from 1 in 7000 to 2 in 7000.
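The gap between the relative and the absolute framing of the same finding can be made concrete with a little arithmetic. The sketch below uses only the 1 in 7000 and 2 in 7000 figures quoted above; the variable names and the per-10,000 presentation are illustrative choices, not part of the original study.

```python
from fractions import Fraction

# Risks quoted in the text; variable names are illustrative assumptions.
baseline_risk = Fraction(1, 7000)  # risk without the pill
pill_risk = Fraction(2, 7000)      # risk while taking the pill

# Relative framing: the increase as a share of the baseline risk.
relative_increase = (pill_risk - baseline_risk) / baseline_risk

# Absolute framing: the increase in risk itself.
absolute_increase = pill_risk - baseline_risk

print(f"Relative increase: {float(relative_increase):.0%}")
print(f"Absolute increase: {float(absolute_increase * 10_000):.2f} extra cases per 10,000 women")
```

Both numbers describe the same result, but the relative figure (a doubling) sounds alarming while the absolute figure (one additional case per 7000 women) does not.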

In one study, Gigerenzer (2010) showed how gullibility crosses educational levels. He invited people to estimate the odds that a woman had breast cancer, given these facts: Among women in her age group, 1 percent had breast cancer. If a woman had breast cancer, the odds were 90 percent that a mammogram would show a positive result. If a woman did not have breast cancer, the odds were about 9 percent that a mammogram would nonetheless show a positive result. Now imagine a woman had a positive mammogram. What is the probability that she had breast cancer? This simple question stymied even physicians, who greatly overestimated her risk.

But consider the same information framed with more transparent natural numbers: Of every 1000 women in this age group, 10 had breast cancer. Of these 10, 9 will have a positive mammogram. Among the other 990 who don’t have breast cancer, some 90 will have a false positive mammogram. So, again, what is the probability that a woman with a positive mammogram had cancer? Given the natural numbers, it becomes easier to see that among the 99 or so women receiving a positive result, only 9, or about 1 in 11, actually had breast cancer.
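The natural-frequency reasoning can be checked against Bayes’ rule. The following is a minimal sketch, assuming a false positive rate of 90 in 990 (inferred from the counts above) and using a hypothetical helper, posterior_given_positive, written only for this illustration. Both routes give 9 women with cancer among roughly 99 positive results, or about 9 percent.

```python
from fractions import Fraction


def posterior_given_positive(prevalence, sensitivity, false_positive_rate):
    """Probability of disease given a positive test, by Bayes' rule.

    Hypothetical helper for illustration; not from Gigerenzer's study.
    """
    true_pos = prevalence * sensitivity
    false_pos = (1 - prevalence) * false_positive_rate
    return true_pos / (true_pos + false_pos)


# Natural-frequency route: count women directly (figures from the text).
true_positives = 9    # of the 10 women with cancer, 9 test positive
false_positives = 90  # of the 990 women without cancer, some 90 test positive
by_counts = Fraction(true_positives, true_positives + false_positives)

# Probability route: same facts as rates.
# Assumption: false positive rate = 90/990, inferred from the counts above.
by_bayes = posterior_given_positive(
    prevalence=Fraction(1, 100),
    sensitivity=Fraction(9, 10),
    false_positive_rate=Fraction(90, 990),
)

print(f"By counts: {by_counts} (about {float(by_counts):.0%})")
print(f"By Bayes:  {by_bayes} (about {float(by_bayes):.0%})")
```

The two routes agree exactly; the counting version simply makes visible that the false positives from the large healthy group swamp the true positives from the small group with cancer.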